Introduction

In 1998 Martin Roesch created Snort, a network intrusion detection system (NIDS) capable of real-time traffic analysis and packet logging on Internet Protocol (IP) networks [1]. Snort was released under a free software license (GNU General Public License version 2), which guarantees end users the freedom to use, study, share (copy) and, most importantly, modify the software. Today new versions of Snort are developed by Sourcefire, Inc. in tandem with the Snort open source community, and Snort is claimed to be the most widely deployed intrusion detection and prevention technology worldwide. Given this wide deployment, it is essential to perform an independent evaluation of Snort aimed at finding all possible weaknesses and at measuring its overall detection efficiency.

Since then, numerous studies have evaluated the ability of Snort to detect intrusions. The datasets used for these evaluations included publicly available datasets such as DARPA, KDD’99 and DEFCON. Because the DEFCON dataset is unlabeled and contains only intrusive traffic, evaluations based on this dataset alone are not comprehensive. The DARPA dataset is fully synthetic and contains no realistic traffic, which suggests that results obtained with it may differ considerably from results obtained in a realistic environment. Other publicly available datasets are heavily anonymized or do not reflect current trends, which makes NIDS evaluation with them impractical.

To avoid the disadvantages of publicly available datasets, the Information Security Centre of Excellence (ISCX) at the University of New Brunswick developed a systematic approach for generating datasets that are modifiable, extensible and reproducible. This approach is based on the concept of profiles, which contain detailed descriptions of intrusions and abstract distribution models for applications, protocols, or lower-level network entities [2]. The profiles were then used in an experiment to generate the desired dataset in a testbed environment, resulting in the publicly available ISCX 2012 dataset, which was selected for the Snort evaluation in this work.

Methodology

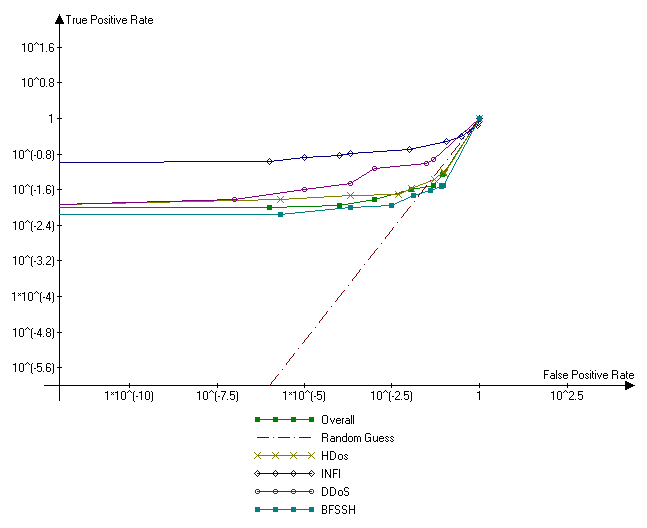

When the detection algorithm of an intrusion detection system evaluates a network packet or connection, it computes a real value between 0 and 1 that characterizes the probability that this connection is an intrusion. The threshold that separates an intrusion from a regular network connection is called the operating point. A NIDS's receiver operating characteristic (ROC) curve is a two-dimensional depiction of detection accuracy that describes the relationship between two operating parameters of the NIDS: its probability of detection and its false alarm probability. That is, the ROC curve shows the probability of detection the NIDS provides at a given false alarm probability, and vice versa [3]. Two dimensions are required to show the whole trade-off: moving the operating point so that the true-positive (detection) rate increases also increases the false-positive (false alarm) rate. The ROC curve thus summarizes the performance of the NIDS. The area under the curve equals the probability that a classifier ranks a randomly chosen positive (intrusive) instance higher than a randomly chosen negative (normal) one. The main advantage of evaluating with the area under the ROC curve is that it assesses the NIDS over all possible operating points rather than at a single one. In this work, the area under the ROC curve was computed as the Wilcoxon statistic (strictly, the Wilcoxon rank-sum or Mann-Whitney U statistic normalized by the number of positive-negative pairs), which is equal to the area under the curve [4].
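The equivalence between the area under the ROC curve and the Wilcoxon rank-sum (Mann-Whitney U) statistic can be checked numerically. The sketch below is illustrative only: the scores and labels are made-up toy values, not ISCX 2012 data, and the function names are hypothetical. It sweeps the operating point over every distinct score to build the ROC curve, integrates it with the trapezoidal rule, and compares the result with the fraction of correctly ranked (positive, negative) pairs.

```python
from typing import List, Tuple


def roc_points(scores: List[float], labels: List[int]) -> List[Tuple[float, float]]:
    """Sweep the operating point over every distinct score (highest first) and
    return (false positive rate, true positive rate) pairs from (0, 0) to (1, 1)."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    points = [(0.0, 0.0)]
    for threshold in sorted(set(scores), reverse=True):
        # An instance is flagged as an intrusion when its score >= threshold.
        tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
        points.append((fp / n_neg, tp / n_pos))
    return points


def trapezoid_auc(points: List[Tuple[float, float]]) -> float:
    """Area under a piecewise-linear ROC curve (trapezoidal rule)."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area


def mann_whitney_auc(scores: List[float], labels: List[int]) -> float:
    """Mann-Whitney U divided by n_pos * n_neg: the share of (positive, negative)
    pairs in which the positive instance gets the higher score (ties count 1/2)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    u = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                u += 1.0
            elif p == n:
                u += 0.5
    return u / (len(pos) * len(neg))


scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3, 0.2]
labels = [1, 1, 0, 1, 0, 0, 1, 0]
print(trapezoid_auc(roc_points(scores, labels)), mann_whitney_auc(scores, labels))
```

For this toy input both functions return 0.75, which illustrates why the rank statistic can stand in for numerical integration of the curve.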

The ISCX 2012 intrusion detection dataset consists of labeled network traces, including full packet payloads, in pcap format, and is publicly available to researchers under the terms of an academic license agreement. The dataset was generated over seven days in a testbed network architecture that emulated 21 interconnected Windows workstations. Three of the seven days contain no malicious activity, and because each day is provided as a separate file, the data from these days can be used for training when machine learning algorithms are applied to intrusion detection. Since Snort accepts pcap files as input, it can be evaluated on the ISCX 2012 dataset without any extra conversion effort.
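Because each day of the dataset is a separate pcap file, Snort can be replayed over the files one at a time. The sketch below assumes a Snort 2.x command-line installation and uses its standard offline options (`-r` to read a pcap file, `-c` for the configuration, `-l` for the log directory, `-A fast` for one-line alerts, `-q` for quiet output). The configuration path, the pcap file names and the helper names are hypothetical and must be adapted to the local setup.

```python
import subprocess
from pathlib import Path
from typing import List, Sequence

# Assumption: location of the local Snort configuration file.
SNORT_CONF = "/etc/snort/snort.conf"


def snort_command(pcap: Path, log_dir: Path, conf: str = SNORT_CONF) -> List[str]:
    """Build a Snort command line that processes one pcap file offline.

    -r reads packets from the pcap file, -c loads the configuration and rules,
    -l sets the log directory and -A fast writes one-line alerts to <log_dir>/alert.
    """
    return ["snort", "-q", "-c", conf, "-r", str(pcap), "-l", str(log_dir), "-A", "fast"]


def run_per_day(pcaps: Sequence[Path], out_root: Path, dry_run: bool = True) -> List[List[str]]:
    """Run Snort once per daily capture, keeping each day's alerts in its own directory.

    With dry_run=True the commands are only built and returned, not executed.
    """
    commands = []
    for pcap in pcaps:
        log_dir = out_root / pcap.stem  # e.g. out/day4 for day4.pcap
        cmd = snort_command(pcap, log_dir)
        commands.append(cmd)
        if not dry_run:
            log_dir.mkdir(parents=True, exist_ok=True)  # Snort expects the log directory to exist
            subprocess.run(cmd, check=True)
    return commands
```

Keeping one log directory per day mirrors the per-day layout of the dataset, so the attack-free days and the attack days stay separable after detection, for example with `run_per_day([Path("day1.pcap"), Path("day4.pcap")], Path("alerts"), dry_run=False)`.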

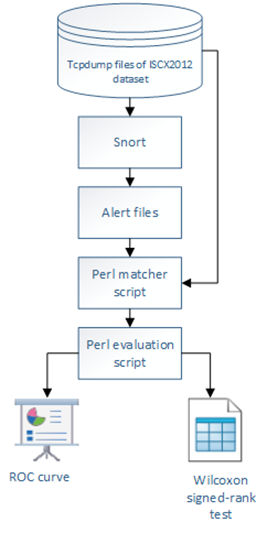

Figure 1 illustrates the process used in this work to run Snort against the ISCX 2012 dataset and to generate ROC curves. Snort records the results of the intrusion detection process in alert files. To measure the probability of detection and the false alarm probability, a Perl matcher script was developed that compares these alerts with the dataset labels. A match was defined as identical Internet Protocol addresses and port numbers (or ICMP types/codes). Timestamps were not included because the time labeled in the dataset differed from the time reported by Snort, which resulted in numerous mismatches. Although excluding the time could allow an alert and an attack to be matched erroneously, this is highly unlikely and did not occur in the large amount of data that was checked manually.
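The matching rule can be sketched as follows. This is not the author's Perl script: it is a minimal Python illustration that assumes alerts and labeled attacks have already been parsed into a hypothetical `Event` record, and it assumes the protocol field is used only to decide whether ports or ICMP type/code form the key. As in the methodology, timestamps are deliberately left out of the match key.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Event:
    """Hypothetical parsed record for either a Snort alert or a labeled attack."""
    proto: str                       # "TCP", "UDP" or "ICMP"
    src_ip: str
    dst_ip: str
    src_port: Optional[int] = None   # TCP/UDP only
    dst_port: Optional[int] = None
    icmp_type: Optional[int] = None  # ICMP only
    icmp_code: Optional[int] = None


def match_key(e: Event) -> Tuple:
    """IP addresses plus ports, or IP addresses plus ICMP type/code; no timestamp."""
    if e.proto == "ICMP":
        return (e.proto, e.src_ip, e.dst_ip, e.icmp_type, e.icmp_code)
    return (e.proto, e.src_ip, e.dst_ip, e.src_port, e.dst_port)


def match(alerts: List[Event], attacks: List[Event]) -> Tuple[List[Event], List[Event]]:
    """Return (attacks matched by at least one alert, alerts matching no attack)."""
    attack_keys = {match_key(a) for a in attacks}
    alert_keys = {match_key(a) for a in alerts}
    detected = [a for a in attacks if match_key(a) in alert_keys]
    false_alarms = [a for a in alerts if match_key(a) not in attack_keys]
    return detected, false_alarms
```

The detected attacks give the numerator of the detection probability and the unmatched alerts give the false alarms; because time is ignored, an alert on a reused address/port pair would be counted as a match, which is the risk the text acknowledges.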

Figure 1. Basic process flow for Snort evaluation methodology based on ISCX 2012 dataset.

Finally, the matched results were passed to a Perl evaluation script that generated the ROC curves and computed the Wilcoxon statistic for Snort. Using the list of attacks correlated with Snort alerts, the script calculated the true positive rate of each rule on the given set of attacks and divided it by the false positive rate of that rule. The script constructed an overall ROC curve (as a table of coordinates), as well as ROC curves for each attack category represented in the ISCX 2012 dataset: Infiltrating the network from inside (INFI), HTTP Denial of Service (HDoS), Distributed Denial of Service using an IRC Botnet (DDoS) and Brute Force SSH (BFSSH). The script then calculated the overall Wilcoxon statistic as well as the statistic for each attack category. The ROC curves were plotted with a free tool, Advanced Grapher (Agrapher). The results are presented in the next section.
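One way to turn per-rule true and false positive rates into an ROC table is sketched below. It is an assumption-laden illustration, not the author's script: it treats each Snort rule as an operating point, orders the rules by the ratio of true positive rate to false positive rate (highest first), accumulates their counts, and closes the curve by extrapolating to (1, 1). The cumulative sums assume that each attack and each normal event is credited to at most one rule; the function names and the example counts are hypothetical.

```python
import math
from typing import Dict, List, Tuple


def rule_roc_table(rule_counts: Dict[str, Tuple[int, int]],
                   n_attacks: int, n_normal: int) -> List[Tuple[float, float]]:
    """Build (FPR, TPR) coordinates from per-rule (true positives, false positives)."""

    def ratio(counts: Tuple[int, int]) -> float:
        tp, fp = counts
        tpr, fpr = tp / n_attacks, fp / n_normal
        if fpr == 0:
            # Rules with detections and no false alarms come first.
            return math.inf if tpr > 0 else 0.0
        return tpr / fpr

    ordered = sorted(rule_counts.values(), key=ratio, reverse=True)
    points = [(0.0, 0.0)]
    tp_sum = fp_sum = 0
    for tp, fp in ordered:
        tp_sum += tp
        fp_sum += fp
        points.append((fp_sum / n_normal, tp_sum / n_attacks))
    if points[-1] != (1.0, 1.0):
        points.append((1.0, 1.0))  # extrapolate from the last operating point
    return points


def trapezoid_auc(points: List[Tuple[float, float]]) -> float:
    """Area under the piecewise-linear ROC curve."""
    return sum((x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(points, points[1:]))


counts = {"rule_a": (6, 0), "rule_b": (2, 10), "rule_c": (1, 40)}
table = rule_roc_table(counts, n_attacks=10, n_normal=100)
print(table, trapezoid_auc(table))
```

With these invented counts the table is `[(0.0, 0.0), (0.0, 0.6), (0.1, 0.8), (0.5, 0.9), (1.0, 1.0)]` and the area is about 0.885. The shape (a steep start from rules without false alarms, a flatter climb, then the straight extrapolation to (1, 1)) matches the kind of curve described in the Results section.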

Results

The results are presented in Figure 2. Visual examination of the overall receiver operating characteristic curve shows three distinct segments: the initial spike, produced by operating points with an excellent detection rate and barely any false positives; a small climb over the large number of operating points that provided some detections with many false positives; and finally the extrapolation from the last operating point to (1,1).

Across attack categories, the smallest Wilcoxon statistic for Snort is 0.633 (for the Brute Force SSH attack), which is not sufficient to conclude that Snort performed well in detecting this attack category. Table 1 shows the calculated Wilcoxon statistics.

Table 1. Wilcoxon statistic (area under the ROC curve) for different categories of attacks

| Attack category | Overall | INFI | HDoS | DDoS | BFSSH |
|---|---|---|---|---|---|
| Wilcoxon statistic (AUC) | 0.726 | 0.913 | 0.749 | 0.742 | 0.633 |

Figure 2. Snort ROC curves for all categories of attacks represented in ISCX2012 dataset

Conclusion

While the initial assumption was that Snort would perform well on the ISCX 2012 dataset, the results showed the opposite. Analysis of the results showed that the dataset includes only a very small number of attacks that are detectable with fixed signatures. While Snort has some capacity to detect attacks such as denial of service and brute force SSH, such detections have not been the primary focus of its design.

In conclusion, the results demonstrate that Snort should not be used alone in networks that may be targeted by zero-day attacks. It could be improved by embedding machine learning algorithms, or by running it alongside other NIDSs in parallel with a centralized decision-making subsystem.

References

- Roesch M. Snort: Lightweight Intrusion Detection for Networks. LISA, 1999, vol. 99, pp. 229-238.

- Shiravi A., Shiravi H., Tavallaee M., Ghorbani A. A. Toward developing a systematic approach to generate benchmark datasets for intrusion detection. Computers & Security, 2012, vol. 31, no. 3, pp. 357-374.

- Maxion R. A., Roberts R. R. Proper use of ROC curves in Intrusion/Anomaly Detection. University of Newcastle upon Tyne, Computing Science, 2004.

- Mason S. J., Graham N. E. Areas beneath the relative operating characteristics (ROC) and relative operating levels (ROL) curves: Statistical significance and interpretation. Quarterly Journal of the Royal Meteorological Society, 2002, vol. 128, pp. 2145-2166.